The Baseline: What Every Report Must Include

If a report says only "we removed 10,000 accounts," it's useless. To actually fight trafficking, we need to see the *why* and *how*. A useful report starts with raw volume but quickly moves into specific categories of abuse, such as the number of accounts banned for suspected non-consensual content or suspected coercion. Real accountability comes from the "detection rate." If a platform removes content only after a user reports it, it is reactive. If it uses proactive tools to find patterns of trafficking, it is actually fighting the problem. We should be looking for the ratio of proactively detected violations to user-reported ones. If the proactive share is low, the platform is essentially letting the community do the police work while traffickers keep uploading.

Tracking the Digital Footprint of Trafficking

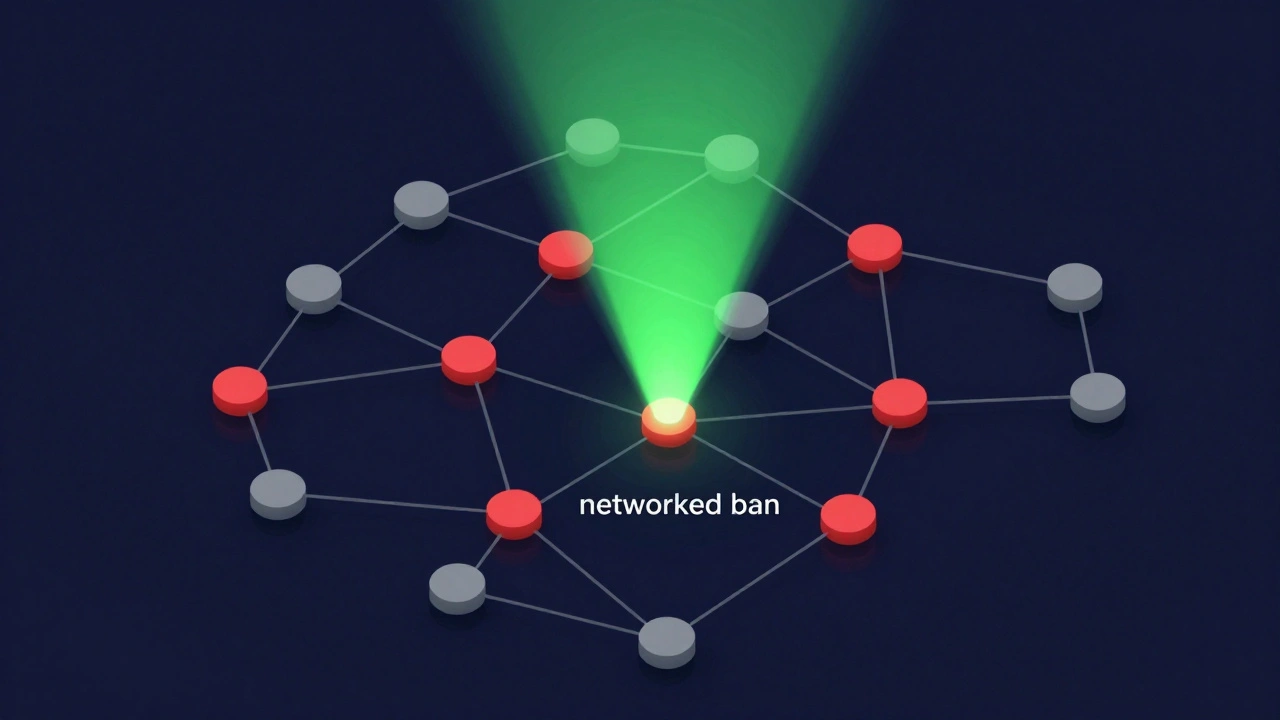

Trafficking isn't always obvious. It often hides in metadata and behavioral patterns. This is where behavioral analysis comes into play: the process of identifying suspicious patterns, such as multiple accounts uploading similar content from the same IP address or device ID. A transparency report should disclose how many "clusters" of accounts were banned. Traffickers rarely operate through a single account; they run a network. If a report shows a spike in "networked bans," it suggests the platform is identifying the infrastructure of a trafficking ring rather than just playing whack-a-mole with individual profiles. We also need to see data on "re-offender rates": how often do banned traffickers return under new aliases? If the number is high, the platform's identity verification is failing.

The Role of Identity Verification and KYC

One of the strongest shields against trafficking is a rigorous KYC (Know Your Customer) process, which requires users to provide government-issued identification to verify their age and identity before uploading content. But KYC isn't a magic bullet. Traffickers often use stolen IDs or coerce victims into providing their own documents. Transparency reports should tell us how many IDs were flagged as fraudulent. If a site says 100% of users are verified but doesn't report the number of failed verification attempts, it is hiding the scale of the attack. We need to see the failure rates. A high failure rate in a specific region might actually be a positive sign: it means the system is catching bad actors before they ever get a profile live.

| Metric | What it tells us | Red Flag Value |
|---|---|---|
| Proactive Detection Rate | How much is found by AI vs. by users | Below 50% proactive |
| Account Cluster Bans | Whether rings or individuals are targeted | Zero mention of "clusters" |
| ID Verification Failure Rate | The volume of attempted fraud | Unreported or "0%" failure |
| Law Enforcement Requests | Cooperation with global authorities | Low volume despite high traffic |
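The red-flag column above can be read as a simple checklist. The following is a minimal sketch of that checklist in Python, not a standard tool: the `ReportFigures` class, its field names, and the `red_flags` function are hypothetical names chosen for illustration. Because the table gives no numeric threshold for "low volume despite high traffic," the sketch only checks whether law enforcement requests are reported at all.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReportFigures:
    """Figures pulled from one transparency report (hypothetical schema).

    None means the report does not disclose that figure.
    """
    proactive_removals: Optional[int]      # found by the platform's own tooling
    user_reported_removals: Optional[int]  # removed only after a user report
    cluster_bans: Optional[int]            # bans of linked account networks
    id_checks_attempted: Optional[int]
    id_checks_failed: Optional[int]
    law_enforcement_requests: Optional[int]


def proactive_rate(r: ReportFigures) -> Optional[float]:
    """Share of removals that were detected proactively, or None if unknown."""
    if r.proactive_removals is None or r.user_reported_removals is None:
        return None
    total = r.proactive_removals + r.user_reported_removals
    return r.proactive_removals / total if total else None


def red_flags(r: ReportFigures) -> List[str]:
    """Apply the red-flag values from the table to one report."""
    flags = []
    rate = proactive_rate(r)
    if rate is None:
        flags.append("proactive detection rate not reported")
    elif rate < 0.5:
        flags.append(f"proactive detection rate {rate:.0%} is below 50%")
    if r.cluster_bans is None:
        flags.append("no mention of account cluster bans")
    if r.id_checks_attempted is None or r.id_checks_failed is None:
        flags.append("ID verification failure rate not reported")
    elif r.id_checks_failed == 0:
        flags.append("ID verification failure rate reported as 0%")
    if r.law_enforcement_requests is None:
        # The table gives no numeric threshold, so only absence is checked.
        flags.append("law enforcement requests not reported")
    return flags


# Illustrative, made-up figures: 300 proactive vs. 700 user-reported removals,
# no cluster data, and zero failed ID checks out of 1,000.
example = ReportFigures(300, 700, None, 1000, 0, 12)
for flag in red_flags(example):
    print(flag)
```

A checklist like this only surfaces questions to ask; a flagged report still needs to be read in context.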

Cooperation with Law Enforcement and NGOs

No platform can stop trafficking alone. They need to work with Interpol and the National Center for Missing & Exploited Children (NCMEC). A transparency report should explicitly state how many reports were filed with these agencies. It's not just about the number of reports, but the *turnaround time*. If a platform receives a high-priority request regarding a missing person, how many hours does it take to freeze the account and preserve the data? When reports list an average response time of 24-48 hours for emergency requests, it shows a functional pipeline. If that data is missing, the platform is likely treating trafficking reports like standard copyright complaints.

The Danger of "Vanity Metrics"

Many platforms try to distract us with vanity metrics. They might brag about "millions of images scanned." That sounds impressive, but it's meaningless without context. Scanning an image is easy; correctly identifying a victim of coercion is hard. We should look for "precision and recall" data. Precision tells us how often the AI was right when it flagged content or banned an account. Recall tells us how much of the total bad content the platform actually caught. If a platform has high precision but low recall, it is playing it safe: banning only the most obvious cases while letting the subtle, more dangerous trafficking patterns slip through the cracks.
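To make the two terms concrete, here is a small worked sketch using the standard definitions of precision and recall. The counts are invented for illustration and do not come from any real report. Note that the number of missed cases is never directly observable, so in practice a platform would have to estimate it, for example from audits of a content sample.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Of everything the system banned, the fraction that was a correct ban."""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0


def recall(true_positives: int, false_negatives: int) -> float:
    """Of all the bad content that existed, the fraction the system caught."""
    actual = true_positives + false_negatives
    return true_positives / actual if actual else 0.0


# Made-up counts: 90 correct bans, 10 wrongful bans, 410 violations missed.
correct_bans, wrongful_bans, missed = 90, 10, 410

print(f"precision = {precision(correct_bans, wrongful_bans):.2f}")  # 0.90
print(f"recall    = {recall(correct_bans, missed):.2f}")            # 0.18
```

With these numbers the system is right 90% of the time it acts, yet it catches only 18% of the violations, which is the "playing it safe" pattern described above.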

Moving Toward a Global Standard

Right now, every adult platform does things its own way. Some publish a simple blog post; others use a complex dashboard. We need a standardized framework, similar to how GDPR (General Data Protection Regulation) standardized data privacy in Europe. Standardization would allow us to compare platforms. If Site A removes 5% of its content for trafficking and Site B removes 0.1%, we can ask why. Is Site B cleaner, or is it just worse at finding the bad guys? Without a common set of metrics, these reports are just marketing brochures. We need a "Trafficking Prevention Index" that scores platforms based on their transparency, their proactive detection, and their speed of cooperation with authorities.

Why aren't these reports mandatory for all platforms?

Many platforms argue that disclosing their detection methods gives traffickers a roadmap to bypass their security. While that's a valid concern, there is a middle ground. They don't have to reveal the exact code of their AI, but they must reveal the outcomes: how many accounts were banned and why. Legislation like the EU's Digital Services Act (DSA) is starting to make this mandatory for larger platforms to ensure they aren't ignoring systemic risks.

Can AI completely replace human moderators in finding trafficking?

No. AI is great for spotting known hashes of illegal content or flagging suspicious login patterns, but it struggles with context. For example, AI might not realize a creator is being coerced if the video looks professional and the creator is smiling. Human moderators with expertise in trafficking indicators are essential to review the flags AI generates and make the final call.

What are the biggest red flags in a transparency report?

The biggest red flags are vague language (e.g., "we take safety seriously" without data), a lack of breakdown by violation type, and a total absence of information regarding law enforcement cooperation. If a report focuses only on "spam" or "copyright" but ignores "coercion" and "trafficking," it's likely the platform is ignoring the most serious crimes.

How does KYC actually stop traffickers?

KYC raises the cost of doing business for traffickers. When a platform requires a verified government ID linked to a bank account, a trafficker can't just create 1,000 fake accounts in an afternoon. It forces them to either steal identities (which leaves a trail) or use a limited number of accounts, making it much easier for behavioral analysis tools to spot the patterns and ban the entire network at once.
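As a rough illustration of how such pattern-spotting could work, the sketch below groups accounts that share an IP address or device ID into clusters using a union-find structure. This is an assumption about one possible approach, not a description of any platform's actual system; the function name `find_clusters`, the identifier format, and the sample data are all hypothetical. Shared identifiers also occur innocently (shared networks, VPNs, carrier NAT), so clusters are leads for human review, not grounds for automatic bans.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_clusters(
    signals: Iterable[Tuple[str, str]], min_size: int = 2
) -> List[Set[str]]:
    """Group accounts linked, directly or transitively, by a shared identifier.

    signals: (account_id, identifier) pairs, where identifier is a string
    such as "ip:203.0.113.7" or "device:abc123" (hypothetical format).
    """
    parent: Dict[str, str] = {}

    def find(x: str) -> str:
        # Locate the cluster representative, compressing the path as we go.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_account_for: Dict[str, str] = {}
    for account, identifier in signals:
        find(account)  # register the account even if it shares nothing
        if identifier in first_account_for:
            union(first_account_for[identifier], account)
        else:
            first_account_for[identifier] = account

    groups: Dict[str, Set[str]] = defaultdict(set)
    for account in list(parent):
        groups[find(account)].add(account)
    return [members for members in groups.values() if len(members) >= min_size]


# Made-up example: acct_a and acct_b share an IP, acct_b and acct_c share a
# device, so all three end up in one cluster; acct_d stands alone.
sample = [
    ("acct_a", "ip:203.0.113.7"),
    ("acct_b", "ip:203.0.113.7"),
    ("acct_b", "device:abc123"),
    ("acct_c", "device:abc123"),
    ("acct_d", "ip:198.51.100.4"),
]
print(find_clusters(sample))
```

The transitive linking is the point: acct_a and acct_c never share an identifier directly, but both are tied to acct_b, which is how a network surfaces even when each account looks isolated.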

Where can I find these reports for a specific site?

Most reputable platforms place these in their "Trust & Safety," "Legal," or "About" sections. If you can't find a "Transparency Report" or "Safety Report" in the footer of the website, the company likely isn't publishing this data, which is a sign of low accountability.