Hosting a high-traffic adult video site is a financial nightmare if you just throw servers at the problem. Between massive 4K files and unpredictable spikes in viewership, your cloud bill can easily eat your entire profit margin before you even realize where the leak is. The real trick isn't just finding a cheaper provider; it's building an architecture that stops you from paying for resources you aren't actually using.

Key Takeaways for Platform Owners

- Stop serving raw files from your primary origin server; it's a recipe for bankruptcy.

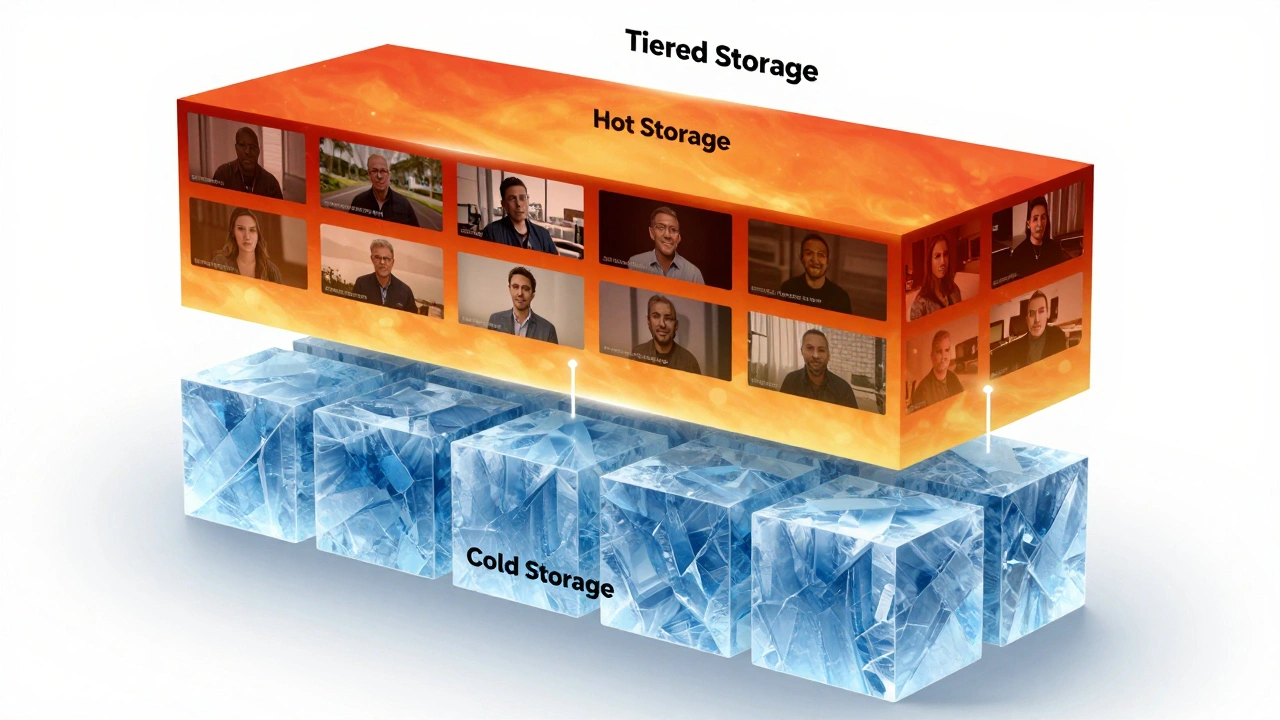

- Use tiered storage based on video popularity (hot vs. cold storage).

- Implement aggressive caching at the edge to reduce egress fees.

- Automate scaling so you aren't paying for idle capacity during low-traffic hours.

The Egress Trap and Why CDNs are Non-Negotiable

If you're serving video directly from a cloud bucket, you're likely paying a fortune in egress fees: the cost of moving data out of the cloud provider's network. For a platform moving petabytes of data, these fees are usually the biggest line item on the bill. This is where a Content Delivery Network (CDN), a distributed group of servers that caches content close to end users, becomes your best friend.

Instead of every user request hitting your origin server, the CDN handles the heavy lifting. Once a video segment is cached at an edge location in Tokyo or Berlin, you pay origin egress for it roughly once per edge location (until the cached copy expires or is evicted), rather than once for every viewer. With a provider like Cloudflare or Akamai, you can lower these costs significantly by increasing your "cache hit ratio", the share of requests answered directly from the edge. At a 95% hit ratio, only 5% of requests ever reach your origin, so origin egress and server load drop accordingly.
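To see why the hit ratio matters so much, here is a back-of-the-envelope Python sketch. It assumes every request is the same size (so the byte hit ratio equals the request hit ratio), treats 1 TB as 1,000 GB, and uses a hypothetical origin egress price of $0.05 per GB; plug in your own provider's rates.

```python
def origin_egress_tb(delivered_tb: float, cache_hit_ratio: float) -> float:
    """Data the origin must still serve, in TB.

    Simplifying assumption: all requests are the same size, so the byte
    hit ratio equals the request hit ratio.
    """
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    return delivered_tb * (1.0 - cache_hit_ratio)


def origin_egress_cost(delivered_tb: float, cache_hit_ratio: float,
                       price_per_gb: float) -> float:
    """Monthly origin egress bill, treating 1 TB as 1,000 GB."""
    return origin_egress_tb(delivered_tb, cache_hit_ratio) * 1000 * price_per_gb


if __name__ == "__main__":
    # Hypothetical month: 1,000 TB delivered to viewers, $0.05/GB origin egress.
    for ratio in (0.0, 0.80, 0.95):
        tb = origin_egress_tb(1000, ratio)
        cost = origin_egress_cost(1000, ratio, 0.05)
        print(f"hit ratio {ratio:.0%}: origin serves {tb:.0f} TB, ~${cost:,.0f}")
```

Going from no cache to a 95% hit ratio shrinks origin egress from 1,000 TB to 50 TB in this example. The CDN still bills for its own delivery, so the real saving depends on how its per-GB rate compares with your cloud provider's egress price.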

| Method | Cost Profile | Performance | Scalability |
|---|---|---|---|
| Direct Origin Download | Extremely High (Egress) | Slow (High Latency) | Poor |
| Standard CDN | Medium (Usage-based) | Fast (Edge Cached) | Excellent |
| Multi-CDN Strategy | Optimized (Price Arbitrage) | Fastest | Excellent (Redundant) |
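The "arbitrage" in a multi-CDN setup usually means sending each region's traffic to whichever provider is cheapest while still meeting a performance target. The Python sketch below shows only that selection rule. The provider names, per-GB prices, latencies, and the 100 ms target are all hypothetical; real deployments typically make this decision in DNS or a traffic-steering service fed by live measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CdnQuote:
    name: str
    price_per_gb: float    # hypothetical negotiated rate for this region
    p95_latency_ms: float  # hypothetical measured latency for this region


def pick_cdn(quotes: list[CdnQuote], max_latency_ms: float) -> CdnQuote:
    """Cheapest CDN that meets the latency target; the fastest one if none does."""
    eligible = [q for q in quotes if q.p95_latency_ms <= max_latency_ms]
    if eligible:
        return min(eligible, key=lambda q: q.price_per_gb)
    return min(quotes, key=lambda q: q.p95_latency_ms)


if __name__ == "__main__":
    region_quotes = {
        "eu": [CdnQuote("cdn_a", 0.010, 40), CdnQuote("cdn_b", 0.007, 55)],
        "apac": [CdnQuote("cdn_a", 0.020, 70), CdnQuote("cdn_b", 0.015, 180)],
    }
    for region, quotes in region_quotes.items():
        print(region, "->", pick_cdn(quotes, max_latency_ms=100).name)
```

In this made-up data, Europe goes to the cheaper `cdn_b` because both providers are fast enough there, while Asia-Pacific stays on `cdn_a` because the cheaper option misses the latency target.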

Smart Storage: Stop Paying for "Hot" Data That's Cold

Not every video on your platform is a hit. A small percentage of your content drives the vast majority of your traffic, while thousands of older videos are rarely watched. If you keep everything in high-performance object storage (a storage architecture that manages data as objects and is typically used for unstructured data like video), such as AWS S3 Standard, you're wasting money.
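To put a number on that waste, here is a small Python calculation. The prices are assumed rough list figures ($0.023 per GB-month for S3 Standard, $0.004 per GB-month for a cold tier such as Glacier Instant Retrieval), the 500 TB catalog is hypothetical, and retrieval and request fees are ignored.

```python
HOT_PRICE_PER_GB = 0.023   # assumed rough S3 Standard price, per GB-month
COLD_PRICE_PER_GB = 0.004  # assumed rough Glacier Instant Retrieval price


def monthly_storage_cost(total_tb: float, cold_fraction: float) -> float:
    """Storage bill when `cold_fraction` of the catalog sits in the cold tier.

    Treats 1 TB as 1,000 GB and ignores retrieval and request fees.
    """
    total_gb = total_tb * 1000
    hot_gb = total_gb * (1 - cold_fraction)
    cold_gb = total_gb * cold_fraction
    return hot_gb * HOT_PRICE_PER_GB + cold_gb * COLD_PRICE_PER_GB


if __name__ == "__main__":
    catalog_tb = 500  # hypothetical catalog size
    everything_hot = monthly_storage_cost(catalog_tb, cold_fraction=0.0)
    tiered = monthly_storage_cost(catalog_tb, cold_fraction=0.9)
    print(f"all hot:  ${everything_hot:,.0f}/month")
    print(f"90% cold: ${tiered:,.0f}/month")
```

With these assumptions, keeping the whole catalog hot costs about $11,500 per month, while moving the long tail (90% of the data) to the cold tier brings it to about $2,950.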

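The smart move is to implement a lifecycle policy that moves videos to a lower-cost tier after 30 days. Note that S3 lifecycle rules count days since an object was uploaded, not since it was last watched; if you want transitions driven by actual viewing, S3 Intelligent-Tiering moves objects that haven't been accessed for 30 consecutive days on its own. Shifting a video from a "hot" tier such as S3 Standard (roughly $0.023 per GB-month) to a "cold" tier such as Glacier Instant Retrieval (roughly $0.004 per GB-month) cuts storage costs for that file by over 80%, although colder tiers add per-GB retrieval fees. The key is to make the transition automatic so your engineers aren't moving files around by hand.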
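A minimal sketch of such a rule in Python, assuming your videos live under a `videos/` prefix and you manage S3 with boto3. The function only builds the configuration dictionary in the shape boto3's `put_bucket_lifecycle_configuration` accepts, so it runs without AWS credentials; the prefix, rule ID format, and bucket name are hypothetical.

```python
import json


def build_lifecycle_config(prefix: str, days: int = 30,
                           storage_class: str = "GLACIER_IR") -> dict:
    """Build an S3 lifecycle configuration in the shape boto3 expects.

    Transition days are counted from object creation, not from the last view.
    """
    return {
        "Rules": [
            {
                # Hypothetical naming scheme for the rule ID.
                "ID": f"move-{prefix.strip('/') or 'all'}-after-{days}d",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [{"Days": days, "StorageClass": storage_class}],
            }
        ]
    }


if __name__ == "__main__":
    config = build_lifecycle_config("videos/")
    print(json.dumps(config, indent=2))
    # Applying it requires boto3 and AWS credentials ("my-video-bucket" is hypothetical):
    # import boto3
    # boto3.client("s3").put_bucket_lifecycle_configuration(
    #     Bucket="my-video-bucket", LifecycleConfiguration=config)
```

Passing `storage_class="INTELLIGENT_TIERING"` instead hands the hot/cold decision to S3's access-based tiering rather than a fixed age.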

Compute Efficiency: Spot Instances and Serverless

Video transcoding (turning a raw upload into multiple resolutions such as 1080p, 720p, and 480p) is CPU-intensive. If you run a dedicated cluster of servers 24/7 for this, you're paying for idle time. Instead, use Spot Instances: unused cloud compute capacity sold at a steep discount compared to on-demand pricing.
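Each rendition in that ladder is an independent job, which is what makes it a good fit for cheap, interruptible workers. The Python sketch below only builds one `ffmpeg` argument list per rendition and does not run anything. The bitrates and output naming are hypothetical, and a production ladder would usually also emit HLS or DASH segments.

```python
import shlex

# Hypothetical rendition ladder: (output height, target video bitrate).
LADDER = [(1080, "5000k"), (720, "2800k"), (480, "1200k")]


def transcode_commands(source: str) -> list[list[str]]:
    """Build one ffmpeg argument list per rendition; nothing is executed here."""
    stem = source.rsplit(".", 1)[0]
    commands = []
    for height, bitrate in LADDER:
        commands.append([
            "ffmpeg", "-i", source,
            "-vf", f"scale=-2:{height}",  # keep aspect ratio, force an even width
            "-c:v", "libx264", "-b:v", bitrate,
            "-c:a", "aac",
            f"{stem}_{height}p.mp4",
        ])
    return commands


if __name__ == "__main__":
    for cmd in transcode_commands("upload_123.mov"):
        print(shlex.join(cmd))
```

Each argument list can be enqueued as its own job, so losing a Spot Instance costs at most one rendition's worth of work.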

Since transcoding is an asynchronous task (the user doesn't need the video ready the second they hit upload), Spot Instances can do the heavy lifting. They are often 70-90% cheaper than on-demand instances. The catch is that the cloud provider can reclaim them at short notice (on AWS, a two-minute warning). To handle this, feed jobs through a queue such as Amazon SQS. A worker deletes a message only after finishing the job, so if a server disappears mid-job, the message becomes visible again once its visibility timeout expires and another Spot Instance picks it up. This turns a potentially expensive operation into a low-cost background process.
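The recovery mechanism is SQS's visibility timeout. The Python sketch below is a toy in-memory model of that behavior, not the SQS API: a received message is hidden for `visibility_timeout` seconds and comes back unless the worker deletes it. The class, job ID, and timings are hypothetical; with boto3 the real calls are `receive_message` and `delete_message`, and `change_message_visibility` can extend the timeout for long transcodes.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryQueue:
    """Toy stand-in for SQS visibility-timeout semantics (not the SQS API)."""
    visibility_timeout: float
    _messages: dict = field(default_factory=dict)         # message id -> body
    _invisible_until: dict = field(default_factory=dict)  # message id -> time

    def send(self, msg_id: str, body: str) -> None:
        self._messages[msg_id] = body
        self._invisible_until[msg_id] = 0.0

    def receive(self, now: float):
        """Return one visible (id, body) pair and hide it, or None."""
        for msg_id, body in self._messages.items():
            if self._invisible_until[msg_id] <= now:
                self._invisible_until[msg_id] = now + self.visibility_timeout
                return msg_id, body
        return None

    def delete(self, msg_id: str) -> None:
        """Called only after the job finishes; otherwise the message reappears."""
        self._messages.pop(msg_id, None)
        self._invisible_until.pop(msg_id, None)

    def __len__(self) -> int:
        return len(self._messages)


if __name__ == "__main__":
    q = InMemoryQueue(visibility_timeout=600)
    q.send("job-1", "transcode upload_123.mov")

    # t=0: Spot worker A takes the job, then is reclaimed before deleting it.
    print("A at t=0 got", q.receive(now=0))
    # t=300: the message is still hidden, so worker B sees nothing yet.
    print("B at t=300 got", q.receive(now=300))
    # t=601: the timeout has expired, so worker B picks the job up and finishes.
    job = q.receive(now=601)
    print("B at t=601 got", job)
    q.delete(job[0])
    print("jobs left:", len(q))
```

The important design choice is deleting the message only after the output is safely written; deleting on receipt would silently lose any job whose Spot Instance was reclaimed.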

Database Optimization and Caching Layers

Your database shouldn't do the heavy lifting of serving metadata for every page load. Every time a user refreshes a gallery, fetching video titles and tags from your primary database costs money in I/O and CPU. To fix this, introduce a caching layer using Redis, an in-memory data store commonly used as a cache, database, or message broker.

By storing the most requested metadata in memory, you reduce the load on your primary database, allowing you to downsize your database instance to a cheaper tier. If you're using a managed service like Amazon RDS, you'll notice that reducing the IOPS (Input/Output Operations Per Second) requirements through caching can shave hundreds of dollars off your monthly bill. Ask yourself: does this piece of data really need to be fetched from the disk every time, or can it live in RAM for ten minutes?
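The "ten minutes in RAM" idea is the cache-aside pattern with a time-to-live. The Python sketch below uses a tiny in-process `TtlCache` as a stand-in for Redis so it runs anywhere; with redis-py, the equivalent calls are `r.get(key)` and `r.setex(key, 600, value)`, with the row serialized (for example as JSON). The key format, the `fake_db` function, and the sample row are hypothetical.

```python
import time
from typing import Callable


class TtlCache:
    """In-process stand-in for Redis GET/SETEX, just enough to show the pattern."""

    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self._clock = clock
        self._store: dict = {}  # key -> (expires_at, value)

    def get(self, key: str):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: behave like a miss
            return None
        return value

    def setex(self, key: str, ttl_seconds: float, value) -> None:
        self._store[key] = (self._clock() + ttl_seconds, value)


def get_video_metadata(video_id: int, cache: TtlCache,
                       fetch_from_db: Callable[[int], dict],
                       ttl_seconds: float = 600) -> dict:
    """Cache-aside: try the cache, fall back to the database, then populate."""
    key = f"video:{video_id}:meta"  # hypothetical key format
    cached = cache.get(key)
    if cached is not None:
        return cached
    row = fetch_from_db(video_id)
    cache.setex(key, ttl_seconds, row)
    return row


if __name__ == "__main__":
    now = [0.0]
    cache = TtlCache(clock=lambda: now[0])
    db_calls = []

    def fake_db(video_id):
        db_calls.append(video_id)
        return {"id": video_id, "title": f"Video {video_id}", "tags": ["hd"]}

    for t in (0, 30, 599):  # three page loads inside the ten-minute window
        now[0] = t
        get_video_metadata(42, cache, fake_db)
    now[0] = 600  # TTL expired: the next load goes back to the database
    get_video_metadata(42, cache, fake_db)
    print("database queries:", len(db_calls))  # 2
```

Four page loads cost only two database queries here; on a busy gallery page the ratio is far larger, which is what lets you shrink the database instance.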

Architecting for the Future: Multi-Cloud and Hybrid Strategies

Relying on a single cloud provider is not only a risk for your uptime but also a financial mistake. Different providers have different strengths. You might find that one provider has cheaper object storage, while another offers a more aggressive CDN pricing model. This is where a hybrid approach comes in.

Some platforms use a "Cloud Bursting" model. They keep their baseline traffic on cheaper, dedicated bare-metal servers (like those from Hetzner or OVH) and only "burst" into AWS or Google Cloud Platform when traffic spikes during a major event or holiday. This prevents the "cloud tax" from applying to your 24/7 baseline load while still giving you the elasticity of the cloud when you actually need it.
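The core of a bursting setup is a simple rule: only rent cloud capacity for the load the bare-metal baseline can't absorb. This Python sketch computes how many cloud instances to add. The capacities, the per-instance throughput, and the 20% headroom are hypothetical numbers you would replace with your own load-test results.

```python
import math


def burst_instances_needed(current_rps: float, baseline_capacity_rps: float,
                           per_instance_rps: float, headroom: float = 0.2) -> int:
    """Number of cloud instances to add on top of the bare-metal baseline.

    `headroom` keeps the baseline from running flat out: with 0.2, the
    bare-metal fleet is only trusted up to 80% of its rated capacity.
    """
    usable_baseline = baseline_capacity_rps * (1 - headroom)
    overflow = current_rps - usable_baseline
    if overflow <= 0:
        return 0  # the baseline handles it; nothing extra goes to the cloud
    return math.ceil(overflow / per_instance_rps)


if __name__ == "__main__":
    # Hypothetical fleet: bare metal rated at 10,000 req/s, 1,500 req/s per cloud VM.
    for load in (6_000, 9_000, 14_500):
        print(load, "req/s ->", burst_instances_needed(load, 10_000, 1_500),
              "cloud instances")
```

Because the function returns 0 for normal traffic, the cloud bill stays near zero outside spikes, which is the point of keeping the 24/7 baseline on dedicated hardware.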

What is the most expensive part of running a video platform?

For almost every adult video platform, bandwidth egress is the biggest cost. When petabytes of data leave the cloud provider's network to reach the end user, the costs add up quickly. This is why implementing a robust CDN strategy is the first and most critical step in cost optimization.

How do I handle transcoding without breaking the bank?

The most efficient approach is Spot Instances coupled with a message queue. Because transcoding is not a real-time requirement, you can run it on spare compute capacity at a fraction of the on-demand price. If an instance is reclaimed, the queue ensures another instance eventually finishes the job.

Is serverless a good option for video processing?

Serverless functions (like AWS Lambda) are great for small tasks, such as generating thumbnails or updating database records. For full video transcoding, however, they are usually more expensive per unit of compute, and limits such as Lambda's 15-minute maximum run time make long jobs impractical. Stick to containers or VMs for the actual video processing.

How often should I move files to cold storage?

A common rule of thumb is the 30-day mark. If a video hasn't been requested in 30 days, its likelihood of being a "viral" hit again drops significantly. Moving it to a cooler tier reduces costs immediately, while "Instant Retrieval" options ensure that if someone does find the old video, there's no noticeable delay in playback.

Can I avoid CDN costs entirely?

Technically yes, but you'll pay for it in user experience and origin egress fees. Serving files directly from your server will lead to slow buffering for global users and massive bills from your cloud provider. A CDN is an investment that pays for itself by slashing the egress fees you'd otherwise pay to the cloud giant.

Next Steps for Your Infrastructure

If you're currently staring at a massive cloud bill, don't try to fix everything at once. Start by auditing your egress logs to see where the data is going. If your egress costs are high, prioritize the CDN setup. Once the "bleeding" is stopped, look at your storage buckets and implement a simple 30-day lifecycle policy. Finally, move your transcoding pipeline to Spot Instances. Small, incremental changes in architecture lead to the biggest savings in the long run.
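A first egress audit can be as simple as summing bytes sent per path prefix. The Python sketch below assumes a hypothetical, simplified log format of one `<path> <bytes_sent>` pair per line; real CDN and load-balancer logs have more fields, so you would adapt the parsing to whatever your provider actually writes.

```python
from collections import Counter


def egress_by_prefix(log_lines, depth: int = 1) -> Counter:
    """Sum bytes sent per path prefix, `depth` segments deep.

    Assumes a hypothetical simplified format: "<path> <bytes_sent>" per line.
    """
    totals = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2 or not parts[1].isdigit():
            continue  # skip malformed lines rather than abort the audit
        path, sent = parts
        segments = [s for s in path.split("/") if s]
        prefix = "/" + "/".join(segments[:depth])
        totals[prefix] += int(sent)
    return totals


if __name__ == "__main__":
    sample = [
        "/videos/abc/1080p/seg1.ts 4000000",
        "/videos/abc/720p/seg1.ts 2000000",
        "/thumbs/abc.jpg 50000",
        "garbage line",
    ]
    for prefix, total in egress_by_prefix(sample).most_common():
        print(f"{prefix}: {total / 1e6:.2f} MB")
```

Raising `depth` drills down from broad categories (videos vs. thumbnails) to individual titles, which shows whether a handful of popular videos or the long tail dominates the bill.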