The internet is currently facing a critical juncture where access meets responsibility. With stricter regulations emerging globally, platforms hosting adult content are under immense pressure to prove users are of legal age. You cannot rely on just a checkbox anymore. Governments are demanding proof, and technology is stepping in to fill that gap. This shift brings powerful tools to the table, but it also opens Pandora’s box regarding privacy and data security.

How AI Age Verification Works Today

When we talk about verifying age in digital spaces, we aren’t discussing simple birthdate forms. Those days ended years ago. Now, systems rely on artificial intelligence, the branch of computer science focused on building systems that perform tasks typically requiring human intelligence. In the context of safety, this tech analyzes visual data to confirm identity. Three main methods currently dominate the market.

Document Scanning: This involves uploading a government-issued ID. The AI reads the hologram, checks the MRZ (Machine Readable Zone), and verifies the photo matches the selfie. It is fast, but it requires handing over your most sensitive physical document to a private company.

Liveness Detection: To stop someone from just holding up a photo of a person, the system asks you to blink, turn your head, or smile. This proves a real human is present. However, sophisticated attackers are getting better at spoofing these tests using deepfake video feeds.

Digital ID Wallets: Newer solutions pull data directly from secure government databases via app integration without ever sending an image of your ID card to the website. This reduces exposure, but it requires massive infrastructure support that many smaller platforms lack.
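The MRZ check mentioned under Document Scanning rests partly on check digits defined in the ICAO 9303 travel document standard: each protected field is followed by a digit computed from repeating weights 7, 3, 1. The sketch below shows only that arithmetic. The function names are illustrative, and a real verification pipeline would combine this with OCR, security-feature checks, and face matching.

```python
def mrz_char_value(ch: str) -> int:
    """Map one MRZ character to its numeric value (ICAO 9303 convention)."""
    if ch in "0123456789":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10  # A=10 ... Z=35
    if ch == "<":
        return 0  # filler character
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Weighted sum with repeating weights 7, 3, 1, taken modulo 10."""
    weights = (7, 3, 1)
    total = sum(mrz_char_value(c) * weights[i % 3] for i, c in enumerate(field))
    return total % 10


def field_is_valid(field: str, check_digit: str) -> bool:
    """True when the printed check digit matches the computed one."""
    return check_digit.isdigit() and mrz_check_digit(field) == int(check_digit)


if __name__ == "__main__":
    # Date-of-birth field in YYMMDD form, followed by its check digit.
    print(field_is_valid("740812", "2"))
```

A mismatch here usually means an OCR misread or a tampered field, so it is a cheap first filter before the more expensive photo and selfie comparison.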

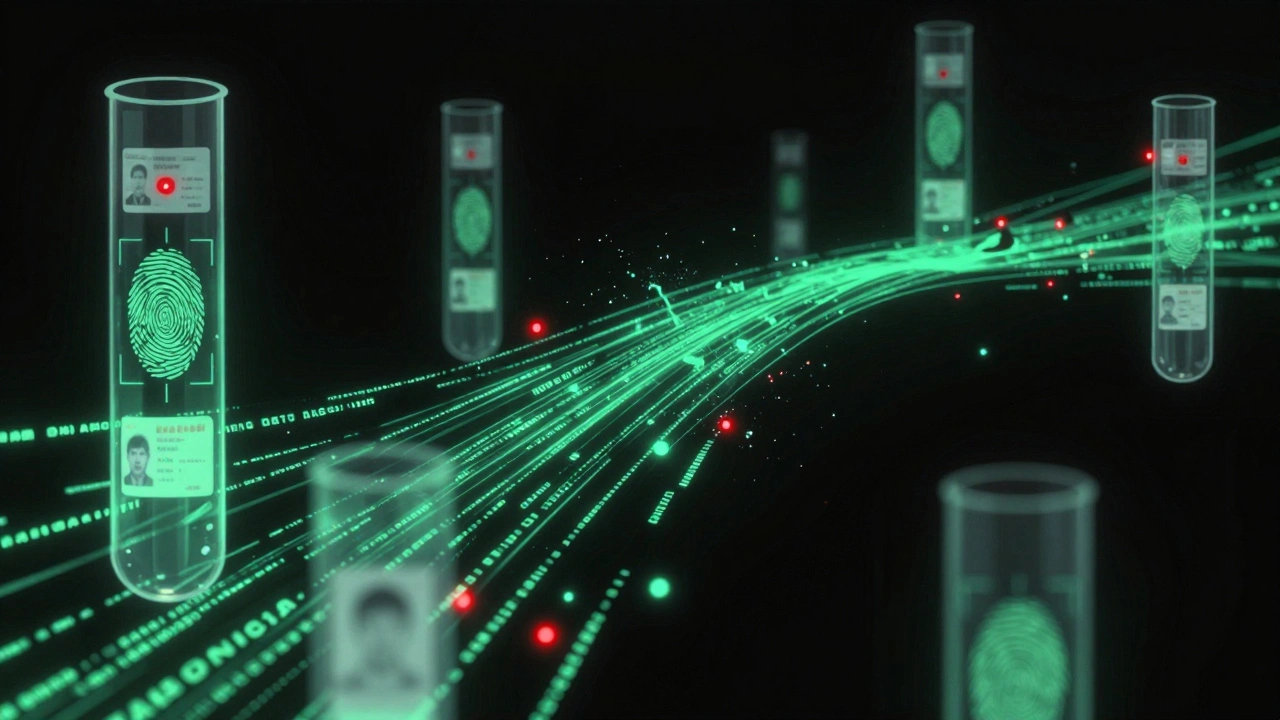

These technologies work together. A robust system doesn't just look at a passport; it cross-references the document with a live selfie and sometimes even payment history. The goal is to create a unique fingerprint of identity that is hard to forge.

| Method | Accuracy | Privacy Risk | User Friction |
|---|---|---|---|
| Document Scan | High | Very High | Medium |
| Facial Liveness | Medium-High | High | Low |
| Digital ID Wallet | Very High | Low | High |

The Reality of Accuracy Rates

Vendors promise near-perfect accuracy, but the real world is messy. In late 2025 testing cycles, third-party audits showed significant variance between different software providers. Some claimed 99% success, while others sat closer to 85%. That gap of 14 percentage points matters when millions of users are signing up: per million sign-ups, it is the difference between roughly 10,000 and 150,000 wrong results.

A major source of error isn't just bad photos. It is often demographic bias. Older facial recognition technology, which maps the features of a human face into a mathematical pattern, can struggle with non-white skin tones or with faces showing aging markers, because many models were trained on younger profiles from Western datasets. If the algorithm incorrectly flags an actual adult as a minor, the platform loses revenue and the user gets locked out unfairly.

The opposite error is more dangerous. If the system mistakenly lets a 15-year-old pass verification, the platform faces severe legal penalties. Recent fines have reached seven figures for non-compliance. This puts site owners in a bind, and many end up preferring overly strict systems that lock out legitimate users over lax systems that admit minors.
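The strict-versus-lax trade-off can be made concrete with a small sketch. It assumes the verifier outputs an estimated age and the platform picks a pass threshold. The sample data below is invented purely for illustration and is not drawn from any audit. Raising the threshold admits fewer minors but blocks more real adults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    true_age: int
    estimated_age: float  # what a hypothetical age-estimation model returned


def error_rates(samples, threshold, legal_age=18):
    """Return (share of minors admitted, share of adults blocked)."""
    minors = [s for s in samples if s.true_age < legal_age]
    adults = [s for s in samples if s.true_age >= legal_age]
    minors_admitted = sum(1 for s in minors if s.estimated_age >= threshold)
    adults_blocked = sum(1 for s in adults if s.estimated_age < threshold)
    minor_rate = minors_admitted / len(minors) if minors else 0.0
    adult_rate = adults_blocked / len(adults) if adults else 0.0
    return minor_rate, adult_rate


# Made-up illustrative data: 4 minors and 6 adults.
SAMPLES = [
    Sample(15, 17.2), Sample(16, 18.9), Sample(17, 19.5), Sample(17, 16.8),
    Sample(18, 17.1), Sample(19, 21.0), Sample(22, 20.4), Sample(25, 23.7),
    Sample(30, 27.9), Sample(41, 38.5),
]

if __name__ == "__main__":
    for threshold in (18, 21, 25):
        minor_rate, adult_rate = error_rates(SAMPLES, threshold)
        print(f"threshold {threshold}: minors admitted {minor_rate:.0%}, "
              f"adults blocked {adult_rate:.0%}")
```

On this toy data, moving the threshold from 18 to 21 stops every minor but doubles the number of adults turned away, which is the bind described above.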

Evasion tactics are evolving too. We are seeing teenagers use generative AI to create synthetic identities. These fake profiles pass document checks because the AI creates a flawless, forged ID number. To combat this, verification engines now analyze metadata in images and check against global watchlists of known fraudsters.

Data Privacy and Security Risks

This is where things get tricky for everyone involved. When you submit a driver's license and a face scan, you are adding to a biometric database. Biometric data is digital information collected from a person's unique biological characteristics. If a database holding your password or credit card number gets breached, you can change them. You cannot change your face.

Many verification companies claim they delete this data immediately after confirming age. While true for some, others aggregate the data to train future models. Without clear transparency, users never know where their scans end up. Under recent privacy frameworks such as Illinois's updated Biometric Information Privacy Act (BIPA), storing this data requires explicit consent and rigorous storage encryption.

We saw breaches in 2024 and 2025 where millions of verified user profiles were leaked on dark web markets. Attackers used this data to build "identity kits" that combine a stolen name, address, and face scan to bypass verification at banks and other services. Your age verification session might be the key that unlocks your bank account elsewhere.

Platforms are trying to move toward "Zero Knowledge Proof" architectures. This means the verification provider knows the user is 18+, but they do not keep the actual ID image. Instead, they send a cryptographic token back to the website. This drastically lowers risk but requires complex infrastructure setup.

Regulatory Pressure in 2026

As of early 2026, compliance is no longer optional for mainstream platforms. The landscape is shifting from self-regulation to statutory law. Several jurisdictions have mandated independent age gates before accessing NSFW content.

Legislation like the Age Appropriate Design Code, a set of standards requiring digital services to prioritize the safety of children, has forced tech giants to adopt centralized verification protocols. If you operate a platform in multiple regions, you face conflicting rules. One country might ban biometric scanning entirely due to human rights concerns, while another mandates it for all financial transactions.

Navigating this requires a flexible compliance engine. Hardcoding a single verification vendor limits your reach. Smart operators integrate multiple providers so they can switch logic based on the IP address of the visitor. If a user logs in from the EU, the system uses a privacy-preserving method. If they come from a region requiring document uploads, the system adjusts automatically.
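A minimal sketch of that routing logic, under stated assumptions: the region labels, the method names, and the tiny geolocation table are all hypothetical, and the IP ranges are the reserved documentation ranges rather than real allocations. A real deployment would rely on a maintained geolocation service and legal review of each region's rules.

```python
import ipaddress
from enum import Enum


class Method(Enum):
    PRIVACY_PRESERVING = "privacy_preserving"
    DOCUMENT_UPLOAD = "document_upload"


# Hypothetical geolocation table built from reserved documentation ranges.
GEO_TABLE = [
    (ipaddress.ip_network("192.0.2.0/24"), "EU"),
    (ipaddress.ip_network("198.51.100.0/24"), "DOCUMENT_REGION"),
]

# Which verification method each region gets (illustrative policy only).
REGION_POLICY = {
    "EU": Method.PRIVACY_PRESERVING,
    "DOCUMENT_REGION": Method.DOCUMENT_UPLOAD,
}

# Assumption: unknown regions fall back to the document flow.
DEFAULT_METHOD = Method.DOCUMENT_UPLOAD


def region_for_ip(ip: str) -> str:
    """Look up the visitor's region, or 'UNKNOWN' if no range matches."""
    address = ipaddress.ip_address(ip)
    for network, region in GEO_TABLE:
        if address in network:
            return region
    return "UNKNOWN"


def choose_method(ip: str) -> Method:
    """Pick the verification method for a visitor based on their region."""
    return REGION_POLICY.get(region_for_ip(ip), DEFAULT_METHOD)


if __name__ == "__main__":
    for ip in ("192.0.2.10", "198.51.100.7", "203.0.113.5"):
        print(ip, choose_method(ip).value)
```

Keeping the policy in a table rather than in branching code means a rule change in one jurisdiction becomes a one-line edit instead of a redeploy of the verification flow.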

Creators are also caught in the middle. Many platforms require *creators* to verify themselves first before they can upload content. This shifts the burden from the viewer to the uploader, which changes the power dynamic significantly. Independent artists worry this centralizes control over expression in the hands of big verification vendors.

Balancing User Experience with Safety

Adding heavy security layers kills conversion rates. Every step added to the sign-up flow drops your retention numbers. The challenge lies in balancing friction with safety. Most users expect a smooth experience. If they have to upload a license to view content, half will leave immediately.

Some sites are experimenting with browser-based signals. By analyzing device age (like having certain OS updates), credit card BINs, and ISP age estimates, they can infer probability without direct scanning. It is less accurate but much faster.
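One way to picture this kind of inference is to combine weak signals into a single probability, then escalate to a direct check when confidence is too low. The sketch below treats the signals as independent and multiplies likelihood ratios. The signal names, the ratios, and the 0.95 cutoff are invented for illustration and would need calibration against real data.

```python
import math

# Hypothetical likelihood ratios: how much more often each signal appears
# for adults than for minors. These numbers are illustrative only.
LIKELIHOOD_RATIOS = {
    "credit_card_bin": 8.0,
    "isp_account_over_5_years": 3.0,
    "device_older_than_3_years": 1.5,
}


def adult_probability(signals: dict, prior: float = 0.5) -> float:
    """Combine the signals that are present into a probability of adulthood."""
    log_odds = math.log(prior / (1 - prior))
    for name, present in signals.items():
        if present:  # simplification: absent signals carry no evidence
            log_odds += math.log(LIKELIHOOD_RATIOS[name])
    return 1 / (1 + math.exp(-log_odds))


def decide(probability: float, pass_at: float = 0.95) -> str:
    """Allow on high confidence, otherwise fall back to a direct check."""
    return "allow" if probability >= pass_at else "escalate"


if __name__ == "__main__":
    strong = {name: True for name in LIKELIHOOD_RATIOS}
    weak = {"credit_card_bin": True}
    for label, signals in (("strong", strong), ("weak", weak)):
        p = adult_probability(signals)
        print(f"{label}: p={p:.3f} -> {decide(p)}")
```

Only visitors with weak or conflicting signals get sent to the slower scan, which is how this approach trades some accuracy for speed.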

For high-risk categories, direct biometric verification remains the gold standard despite the friction. Users who care about safety tend to accept the trade-off for peace of mind. Knowing they aren't accidentally exposing their kids to harmful material drives adoption of stricter systems over time.

Moving Forward Safely

The trajectory is clear. Manual checks are disappearing, and automated AI verification is becoming mandatory infrastructure. As we settle into the norms of 2026, the focus shifts from just catching children to protecting adult users from misuse of their own data.

Users must demand transparency. Ask what happens to your data after the check ends. Platforms must publish their breach reports honestly. Regulators need to enforce uniform standards so vendors aren't cutting corners to win contracts. Until then, every click represents a gamble with personal privacy.

Frequently Asked Questions

What happens to my ID after I verify my age?

Ideally, the data is encrypted and deleted within 24 hours, leaving only a confirmation token. However, practices vary by provider. Always check the platform's privacy policy to see if they retain a copy of the original document scan for "compliance auditing."

Can AI detect fake IDs created by Deepfakes?

Modern systems use liveness detection to spot synthetic media. They analyze pixel artifacts and the subtle blood flow patterns that cameras can pick up, a technique known as remote photoplethysmography (rPPG). While highly effective, determined actors with high-end hardware can still occasionally bypass these filters.

Is facial recognition safer than uploading an ID card?

It depends on the backend. Face scans are permanent biometric data that cannot be reset if stolen. ID cards can sometimes be re-issued. However, if the scanner deletes the image immediately after verification, a facial scan might offer less long-term exposure than a stored ID database.

Does age verification slow down website loading?

Yes, integrating external verification APIs adds latency. It usually takes 2-5 seconds extra per transaction. Browser caching helps, but repeated logins across different devices will trigger new verification challenges, increasing load times slightly.

Which countries require strict age verification?

By mid-2026, strict mandates exist in the UK, Ireland, and several US states like Texas and Florida. Global brands often roll out a single implementation worldwide to avoid maintaining separate regional versions of their sites, which means stricter rules often apply everywhere.