When you think about adult websites, you probably think about content - videos, images, chats. But behind every site that stays online, avoids legal trouble, and keeps users safe, there’s a team working in the shadows. These are the trust and safety teams - the unseen guards of adult platforms. They don’t get credit. They don’t appear in marketing videos. But if they fail, the whole site collapses.

What Trust and Safety Teams Actually Do

Most people assume these teams just delete explicit content. That’s not even close. Their job is layered, complex, and emotionally heavy. A trust and safety team at an adult site handles everything from identifying non-consensual material to stopping child exploitation, managing user reports, enforcing age verification, and even coordinating with law enforcement when needed.

These teams don’t just react - they predict. They analyze patterns. If a certain type of video starts appearing in high volume from one region, they trace it back to uploaders, check for coercion, and shut down the source before it goes viral. They don’t wait for complaints. They hunt.

At the core, they enforce policies that balance freedom of expression with real-world harm prevention. That’s not easy. One person’s consensual fantasy is another’s trauma trigger. The team has to know the difference - and they have to do it fast.

Key Roles Inside a Trust and Safety Team

These teams aren’t one-size-fits-all. The biggest adult platforms have specialized roles working in sync:

- Content Reviewers - The frontline. They view thousands of uploads daily, flagged by AI or users. They need training in trauma-informed moderation, legal definitions of obscenity, and cultural context. Many work in shifts, with mandatory mental health breaks.

- Policy Analysts - They write and update the rules. Not legal documents, but clear, actionable guidelines: "What counts as non-consensual?" "Is simulated underage content banned if the actors are over 18?" They work with lawyers, psychologists, and global compliance teams.

- Technical Moderators - They build and tweak the AI tools that flag content before humans see it. They train models to recognize specific patterns: facial expressions, body angles, clothing tags, even audio cues. These tools evolve constantly as uploaders find new ways to sneak through.

- Incident Responders - They handle urgent cases: live streams of abuse, threats of doxxing, coordinated harassment campaigns. They have direct contact with law enforcement and can freeze accounts or servers in minutes.

- User Experience Advocates - They bridge the gap between users and policy. If users keep reporting the same issue - say, fake accounts impersonating celebrities - this person pushes for system changes. They’re the voice of the community.

Each role requires different skills. A reviewer needs emotional resilience. A technical moderator needs coding fluency. But they all share one thing: they’ve seen things most people can’t unsee.

Metrics That Actually Matter

Most companies track how many posts they delete. That’s a vanity metric. Trust and safety teams track what actually protects people.

- Time-to-Remove - How long from when a report is filed until the content is gone. Top teams aim for under 15 minutes for high-risk content like non-consensual material.

- False Positive Rate - How often legitimate content gets wrongly removed. If it’s above 5%, users start losing trust. Teams use feedback loops: users can appeal, and reviewers recheck flagged items.

- Recidivism Rate - How often banned users come back under new accounts. Advanced teams use device fingerprinting, payment ID tracking, and behavioral analysis to catch repeat offenders.

- Report Resolution Rate - Not just how many reports are processed, but how many users say they’re satisfied with the outcome. Surveys are sent out after every resolved case.

- Internal Burnout Rate - This one’s rarely public, but it’s critical. Teams with high turnover have weaker enforcement. Leading platforms offer therapy, rotation schedules, and mandatory 3-week sabbaticals after 6 months of frontline work.

These metrics aren’t just numbers. They’re early warning signs. A spike in false positives? Maybe the AI model was overfit to one type of content. A drop in report resolution? Users might be losing faith. Teams monitor these like a doctor watches vital signs.
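As a rough illustration, the first two metrics could be computed from case records along these lines. This is a minimal Python sketch: the `Case` record and its field names are assumptions made for this example, not any platform's actual schema, and the false positive figure is approximated as the share of removals later reversed on appeal.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Case:
    """One moderation case (hypothetical schema for illustration)."""
    reported_at: datetime
    removed_at: Optional[datetime] = None  # None means the content stayed up
    appeal_upheld: bool = False            # True if the removal was reversed on appeal


def median_minutes_to_remove(cases: list[Case]) -> float:
    """Median minutes between report and removal, over removed cases only."""
    delays = [
        (c.removed_at - c.reported_at).total_seconds() / 60
        for c in cases
        if c.removed_at is not None
    ]
    if not delays:
        raise ValueError("no removed cases to measure")
    return median(delays)


def false_positive_rate(cases: list[Case]) -> float:
    """Share of removals reversed on appeal (a proxy for wrongful removals)."""
    removed = [c for c in cases if c.removed_at is not None]
    if not removed:
        return 0.0
    return sum(c.appeal_upheld for c in removed) / len(removed)
```

The median is used rather than the mean so that a single slow case does not hide how quickly typical reports are handled. A team would likely track the slowest cases separately.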

Playbooks: How Teams Handle Real Incidents

Every team has a playbook - a step-by-step guide for handling common crises. These aren’t theoretical. They’re built from real events.

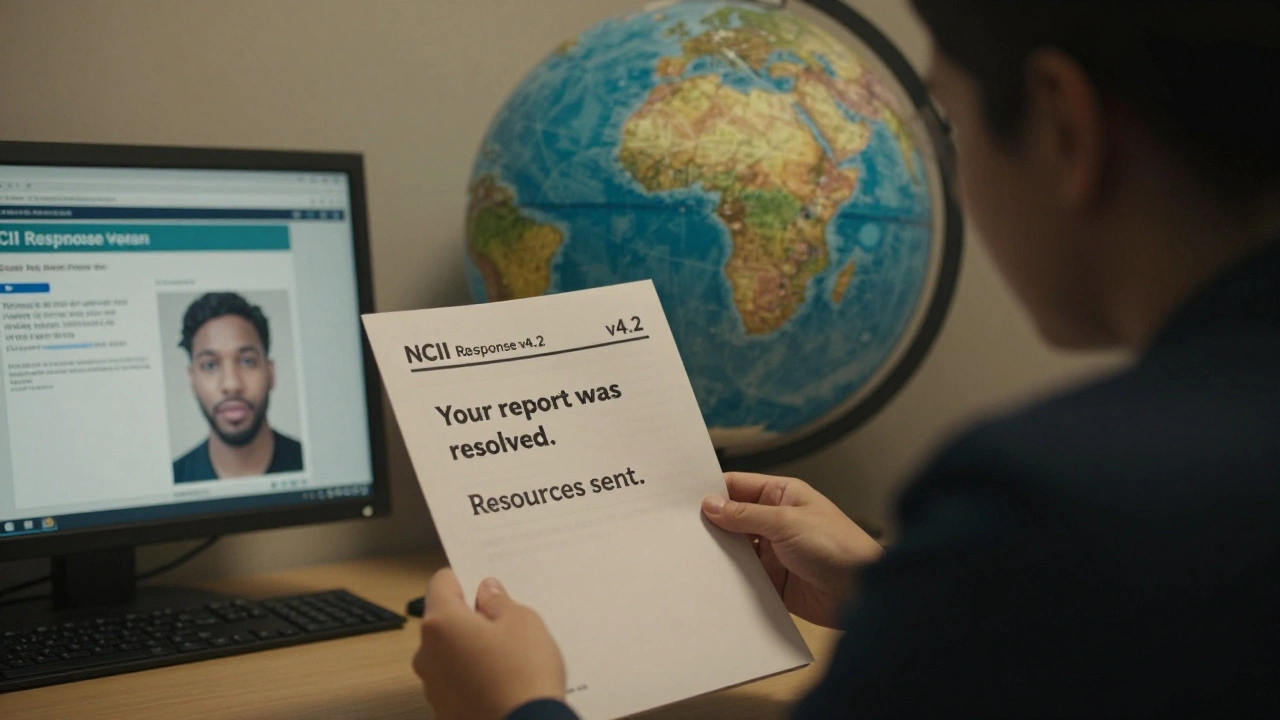

Playbook 1: Non-Consensual Intimate Imagery (NCII)

- Report received via app or email.

- AI flags based on facial recognition and metadata.

- Reviewer confirms identity match with verified victim database (from partner orgs like Without My Consent).

- Content removed within 10 minutes.

- Account permanently banned.

- IP and device hash added to global blacklist shared with 12+ adult platforms.

- Victim notified via secure channel with resources (counseling, legal aid).

Playbook 2: Coordinated Harassment Campaigns

- Multiple users report same target with identical abusive messages.

- Team checks for bot patterns: identical posting times, same language, fake accounts.

- Identifies origin: often a disgruntled ex or rival creator.

- Blocks all related accounts in one sweep.

- Contacts law enforcement if threats involve real-world harm.

- Issues public statement to community to prevent panic.
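The bot-pattern check in this playbook (identical messages, clustered posting times, multiple accounts) can be sketched as a simple grouping pass. This is a minimal Python illustration under stated assumptions: the tuple layout, the `min_senders` threshold, and the 10-minute window are all hypothetical choices, not values from any real platform.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variations still match."""
    return " ".join(text.lower().split())


def find_coordinated_groups(messages, min_senders=3, window=timedelta(minutes=10)):
    """Flag targets that receive the same message from many accounts in a short span.

    messages: iterable of (sender_id, target_id, text, sent_at) tuples.
    Returns a list of (target_id, normalized_text, sorted_sender_ids).
    """
    buckets = defaultdict(list)
    for sender, target, text, sent_at in messages:
        buckets[(target, normalize(text))].append((sent_at, sender))

    flagged = []
    for (target, text), hits in buckets.items():
        hits.sort()  # order by timestamp
        start = 0
        for end in range(len(hits)):
            # Shrink the window until it spans at most `window` of time.
            while hits[end][0] - hits[start][0] > window:
                start += 1
            senders = {s for _, s in hits[start:end + 1]}
            if len(senders) >= min_senders:
                flagged.append((target, text, sorted(senders)))
                break
    return flagged
```

Counting distinct senders, rather than raw message volume, keeps one persistent account from being mistaken for a coordinated campaign. A flagged group here is a lead for a human reviewer, not proof of coordination.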

Playbook 3: Age Verification Bypass

- AI detects underage behavior: language, content preferences, login patterns.

- Secondary check: video call with live human verification (not just ID upload).

- If bypass confirmed, account deleted and payment method flagged.

- Parental control tools are offered to users who report minors.

- Platform updates verification tech within 48 hours.

These playbooks are updated quarterly. Teams test them with simulated attacks. If a playbook fails, it’s rewritten.
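One way to make a playbook testable in a simulated drill is to encode its steps and time targets as data, then audit a recorded run against them. The sketch below does this for Playbook 1. The step names paraphrase that playbook, only the 10-minute removal target comes from it, and the `Step` type and `audit_run` helper are hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class Step:
    name: str
    deadline: Optional[timedelta] = None  # max time since the report, if any


# Hypothetical encoding of Playbook 1 (NCII).
NCII_PLAYBOOK = [
    Step("report_received"),
    Step("ai_flag"),
    Step("reviewer_confirms"),
    Step("content_removed", deadline=timedelta(minutes=10)),
    Step("account_banned"),
    Step("blocklist_updated"),
    Step("victim_notified"),
]


def audit_run(playbook: list[Step], completed: dict[str, timedelta]) -> list[str]:
    """Compare a drill against the playbook.

    completed maps step name -> time elapsed since the report when it finished.
    Returns a list of problems; an empty list means the run met the playbook.
    """
    problems = []
    for step in playbook:
        elapsed = completed.get(step.name)
        if elapsed is None:
            problems.append(f"missing: {step.name}")
        elif step.deadline is not None and elapsed > step.deadline:
            problems.append(f"late: {step.name}")
    return problems
```

A drill that removes the content after 12 minutes and never notifies the victim would come back with `late: content_removed` and `missing: victim_notified`, which points at the steps to rewrite.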

Why This All Matters - And Why It’s Underfunded

Adult sites make billions. Yet trust and safety teams are often the smallest, lowest-paid part of the company. Why? Because investors see them as a cost center, not a profit driver. But here’s the truth: without them, no platform survives.

In 2024, one major adult site collapsed after a single video of underage coercion went viral. They had no real-time detection system. No victim database. No incident playbook. The backlash was instant. Legal fines hit $18 million. Traffic dropped 80%. They never recovered.

Meanwhile, platforms with strong trust and safety teams - like those that invest in AI training, mental health support, and global collaboration - see higher user retention, fewer legal issues, and better brand loyalty. Users don’t care how sexy your content is. They care if they feel safe.

The Future: AI, Global Rules, and Human Oversight

The next five years will change everything. AI will get better at detecting harm - but so will bad actors. Deepfakes are already being used to fabricate non-consensual content. New laws in the EU and Canada will force platforms to prove they’re doing more than just deleting posts.

Expect:

- Real-time video analysis during live streams

- Global shared databases of banned users and devices

- Third-party audits of moderation practices

- Legal liability for companies that ignore clear patterns of abuse

The teams that survive won’t be the ones with the most AI. They’ll be the ones that treat their staff like essential workers - not expendable labor.

Do adult sites have to follow the same rules as social media platforms?

Broadly, yes - especially in the U.S., EU, and Canada - though the details vary by jurisdiction. In the U.S., FOSTA-SESTA narrowed Section 230 immunity for platforms in sex-trafficking cases, and the EU’s Digital Services Act applies to online platforms that serve EU users, regardless of niche. Adult sites can’t claim exemption just because their content is explicit. In fact, they’re often held to higher standards because of the risk of exploitation. Depending on the law, failure to comply can lead to criminal charges against executives, not just fines.

How do trust and safety teams deal with cultural differences in content?

They use geo-specific policy layers. A video that’s legal in the U.S. might be banned in Germany or Japan. Teams maintain region-specific rule sets and adjust moderation based on user location. They also consult local NGOs and legal experts to avoid cultural bias. For example, some cultures view certain sexual acts as taboo even if they’re consensual - those are flagged differently than non-consensual acts. Context matters more than blanket bans.
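A geo-specific policy layer can be pictured as a base rule set with per-region overrides on top. The Python sketch below is illustrative only: the category names, the region codes `XX` and `YY`, and the three actions are placeholders invented for this example and do not describe any real country's law.

```python
# Categories that no regional layer may relax (assumed for this sketch).
ALWAYS_BLOCKED = frozenset({"non_consensual"})

# Default action per content category (placeholder categories).
BASE_POLICY = {
    "category_a": "allow",
    "category_b": "allow",
}

# Region-specific overrides layered on top of the base policy.
REGION_OVERRIDES = {
    "XX": {"category_a": "block"},
    "YY": {"category_b": "review"},
}


def resolve_action(category: str, region: str) -> str:
    """Return 'allow', 'block', or 'review' for a category in a region."""
    if category in ALWAYS_BLOCKED:
        return "block"
    layered = {**BASE_POLICY, **REGION_OVERRIDES.get(region, {})}
    # Unknown categories fall through to a human reviewer.
    return layered.get(category, "review")
```

Checking the always-blocked set before any regional lookup keeps the distinction the answer draws: regional layers adjust how consensual content is handled, while non-consensual material is blocked everywhere.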

Can users report false positives?

Absolutely. Every platform with a serious trust and safety team offers an appeal process. Users can submit evidence - like timestamps, consent forms, or proof of age - to have content reinstated. Teams review appeals manually. If a reviewer made a mistake, they’re retrained. If the system flagged incorrectly, the AI model is adjusted. Transparency builds trust.

Are there any organizations that help adult site teams?

Yes. Groups like the Internet Watch Foundation (IWF), Without My Consent, and the Cyber Civil Rights Initiative provide tools, databases, training, and legal guidance. Many adult platforms partner with them. The IWF’s hash database, for example, helps platforms instantly recognize known illegal content across borders. These aren’t activist groups - they’re technical and legal partners.
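To show the general idea behind hash-list matching, here is a minimal Python sketch using exact SHA-256 digests. It is an assumption-laden simplification, not the IWF's actual format or API: the `HashList` class is hypothetical, and production hash lists typically also rely on perceptual hashes that survive re-encoding, which this example does not implement.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex digest of the raw file bytes."""
    return hashlib.sha256(data).hexdigest()


class HashList:
    """Hypothetical in-memory list of digests for known illegal files."""

    def __init__(self, known_hashes):
        self._known = set(known_hashes)

    def matches(self, data: bytes) -> bool:
        """True if the upload is byte-for-byte identical to a listed file."""
        return sha256_hex(data) in self._known
```

Because only digests are shared, platforms can recognize a known file without exchanging the file itself. The trade-off of exact hashing is that changing a single byte defeats the match.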

What happens if a trust and safety team makes a mistake?

Mistakes happen - deleting a consensual video, missing a new form of abuse, misidentifying a user. The key isn’t perfection - it’s accountability. Leading teams publish quarterly transparency reports. They admit errors, explain fixes, and show how they improved. Users respect honesty more than flawless performance. A team that owns its mistakes builds more trust than one that pretends it never fails.

Trust and safety teams aren’t about censorship. They’re about survival - for users, for creators, and for the platforms themselves. The next time you see a video on an adult site and think "this is fine," remember: someone somewhere saw it first - and decided it was safe to stay.